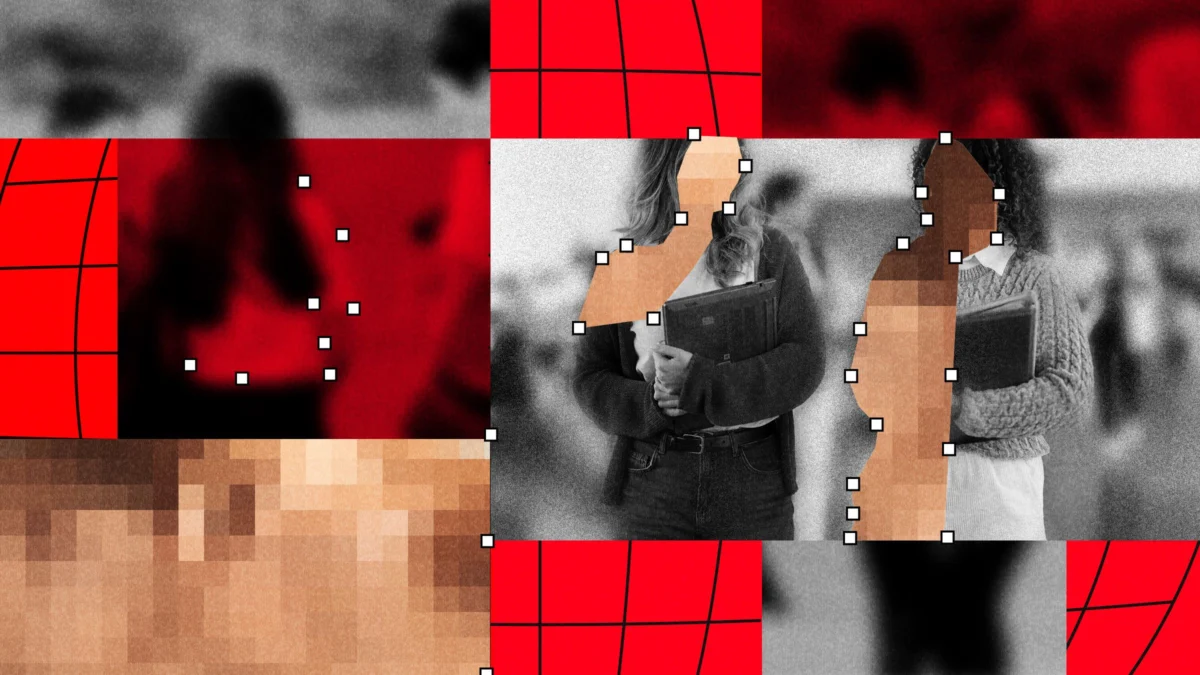

The Global Surge of AI-Generated Deepfake Sexual Abuse in Schools and the Crisis of Institutional Response

The phenomenon typically begins with a benign act: a student downloads a classmate’s photograph from Instagram, Snapchat, or a school yearbook. Within minutes, that image is processed through readily accessible, often free or low-cost “nudify” applications powered by generative artificial intelligence. The result is a hyper-realistic, nonconsensual explicit image or video that, once circulated, can devastate a student’s social life, psychological well-being, and sense of safety. This form of digital exploitation, whose output is now categorized as child sexual abuse material (CSAM), has evolved from a fringe technological concern into a systemic crisis impacting schools across at least 28 countries.

A Rapidly Expanding Digital Threat

Since 2023, the landscape of digital abuse in educational environments has shifted dramatically. While deepfake technology has been available in various forms since 2017, the democratization of generative AI has removed the primary barrier to entry: technical expertise. Today, any individual with a smartphone and basic internet access can manipulate imagery to commit sexual harassment.

An exhaustive review of global incidents conducted by WIRED and Indicator suggests that this is no longer an isolated or sporadic issue. At least 90 schools worldwide have reported significant incidents of AI-driven sexual abuse, affecting more than 600 pupils. However, researchers emphasize that these figures are likely a massive undercount. Because many schools and law enforcement agencies handle these incidents internally—often to protect the privacy of minors or to avoid institutional scandal—the true scope of the victimization remains obscured. UNICEF’s recent data provides a starker estimate, suggesting that as many as 1.2 million children were the targets of sexual deepfakes globally in the last year alone.

Chronology of the Crisis

The escalation of this crisis can be traced through several stages:

- 2017–2020: The Nascent Stage: Deepfake technology gains public attention, initially focused on the manipulation of celebrity images. Institutional awareness in K-12 settings remains near zero.

- 2021–2022: The Shift to Peer-to-Peer: The technology begins to trickle down into social circles. Reports of “nudify” apps start appearing in digital safety forums, though incidents remain localized and rare.

- 2023: The Proliferation Phase: Generative AI models become widely accessible. The number of reported cases in schools spikes globally. Significant incidents in North America, Europe, and Australia bring the issue to the forefront of educational policy debates.

- 2024–2025: Institutional Reckoning: Schools begin to formalize responses. Some institutions implement bans on yearbook photography or social media posting, while others grapple with the legal complexities of punishing students for creating CSAM.

The Data Behind the Harm

The statistical evidence underscores a pervasive, systemic problem. In Spain, for example, research from Save the Children indicates that one in five young people report being victims of AI deepfakes, with almost all reported instances involving sexual content. Similar findings from the Center for Democracy and Technology reveal that 15 percent of students in the United States are aware of AI-generated imagery involving peers at their own schools.

The motivation behind these acts is multifaceted. While some instances stem from genuine sexual interest, experts like Amanda Goharian of the child safety group Thorn note that the primary drivers are often social: revenge, peer-group dares, and a desire for social control. This perspective is echoed by Siddharth Pillai of the RATI Foundation, who argues that the AI-driven "glut of content" is fundamentally an issue of power and humiliation. The victims are not just dealing with an image; they are dealing with the permanent, haunting knowledge that their likeness has been violated and could resurface at any moment.

Institutional and Legal Inadequacy

The response from educational and law enforcement bodies has been inconsistent. In several high-profile cases, parents have expressed outrage over the delayed reactions of school administrators. In one instance, a school took three days to report a clear case of CSAM to law enforcement. In others, victims have reported that the perpetrators faced no significant disciplinary consequences, leaving the victims to bear the burden of the trauma alone.

Legal systems are also struggling to keep pace. While some jurisdictions are treating these cases as felony CSAM production, others are forced to navigate grey areas in statutes that were written long before the advent of generative AI. The sentencing of two Pennsylvania teenagers to community service for creating sexual deepfakes of 60 female classmates represents a milestone in judicial intervention, but legal scholars argue that current frameworks are largely reactive rather than preventative.

Broadening Targets: The Teacher Vulnerability

The crisis is not limited to student-on-student victimization. Educators and staff have increasingly become targets of deepfake technology. There have been multiple documented instances of students using AI to generate explicit or humiliating content depicting their teachers. In Oregon, a school was forced to rely on substitute teachers after regular staff members were targeted by a social media account sharing manipulated images. This trend has created a hostile environment for educators, who find themselves powerless to stop the spread of AI-generated defamation.

The Path Toward Mitigation

As the severity of the situation becomes impossible to ignore, some institutions are taking proactive measures. Schools in Australia and South Korea have moved to restrict the use of student images in public-facing media, opting for silhouettes or distant group shots to mitigate the risk of source material being stolen for "nudification."

Furthermore, educational experts like Evan Harris, founder of the Pathos Consulting Group, advocate for a comprehensive approach to crisis readiness. This includes:

- Digital Literacy Training: Educating students on the legal and moral implications of creating nonconsensual content.

- Forensic Preparedness: Training administrators on how to preserve digital evidence and coordinate with law enforcement.

- Support Systems: Providing victims with the psychological and legal resources necessary to navigate the aftermath of an image leak.

Legislative efforts are also gaining momentum. The U.S. "Take It Down Act," which requires tech platforms to remove nonconsensual intimate images within 48 hours of a victim's request, is a direct response to the pressure exerted by students and advocacy groups who have grown frustrated with the slow pace of change. Similar efforts are underway in the UK and the EU to ban the underlying technologies that facilitate "nudification."

The Societal Implication

Ultimately, the deepfake crisis is a reflection of entrenched gender dynamics and the speed at which technology outpaces policy. Tanya Horeck, a professor of media studies, notes that while the technology is the tool, the underlying issue is the social facilitation of gender-based violence.

The lasting impact on victims, many of whom are minors, is profound. The fear of future exposure, the loss of trust in peers, and the disruption of the educational experience create a cycle of trauma that schools are currently ill-equipped to break. Until there is a synchronized effort between tech developers, policymakers, and educational institutions to implement both technical safeguards and meaningful deterrence, the "nudify" epidemic will continue to haunt the digital lives of the next generation. The challenge for the coming years will be to ensure that the digital world does not remain a place where such violations can occur with impunity, and that schools are not transformed from places of learning into sites of digital surveillance and vulnerability.